
This is because the CPU time (column labled CPU) only measures the time spent by the main thread, which is not helpful for the multi-threaded benchmarks.Įxecution time results for our four microbenchmarks can be found below. Execution time resultsįor each of the benchmarks we will be focusing on the wall clock time (column labled “Time”). │ _ZNSt6thread11_State_implINS_8_InvokerISt5tupleIJZ8diff_varvEUlvE0_EEEEE6_M_runEv():Īs is the case for the singleThread benchmark, all three of the multithreaded benchmarks spend most of their time on the atomic increment. Each thread simply runs a single inlined version of the work() function. The code for our multi-threaded benchmarks all look identical. If you have not compiled with -march=native, the incl (increment) instruction may be replaced with and addl instruction: 10: lock addl $0x1,(%rdx) The loop corresponds to only three instructions: 10: lock incl -0x4(%rsp) The following pieces of assembly were taken from perf reports, where the column labled “Percent” corresponds to where the profiler is saying our program is spending most time.įor our singleThread benchmark, four calls to the work() function are inlined, leading to four tight loops that do an atomic increment: Percent│Ġx186a0 translates to 100k decimal (the number of iterations in our work() loop.

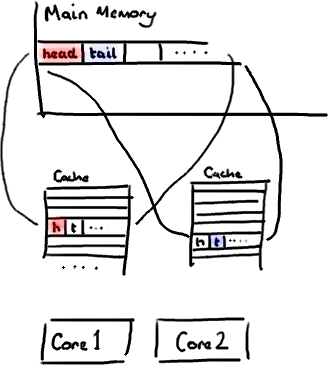

Void directSharing () BENCHMARK ( noSharing ) -> UseRealTime () -> Unit ( benchmark :: kMillisecond ) Our benchmarks at the assembly levelīefore we take a look at the results, it’s important to understand what our code is doing at the lowest level. Optimization Case StudyĬonsider the following simple function in C++ that we want to run 4 times: Let’s consider a simple optimization case-study where a well-intentioned programmer optimizes code without thinking about the underlying architectures. This can lead to performance that looks like multiple threads are fighting over the same data. When this happens, cores accessing seeming unrelated data still send invalidations to each other when performing writes. Where does false sharing come from?įalse sharing occurs when data from multiple threads that was not meant to be shared gets mapped to the same coherence block. To simplify our discussion, we will simply refer to the range of data for which a single coherence state is maintained as a coherence block (a page or cache-line/block in practice). Coarser-grained coherence (say at the page-level) can lead to the unnecessary invalidation of large pieces of memory. Finer-grained coherence (say at the byte-level) would require us to maintain a coherence state for each byte of memory in our caches. Cache-lines/blocks strike a good balance between control and overhead. Once the core trying to perform a write has gained exclusive access, it can perform its write operation.īut what exactly is getting invalidated? Typically, it is a cache-line/block. Access is taken away by invalidating copies of the data other cores have in their cache hierarchy.

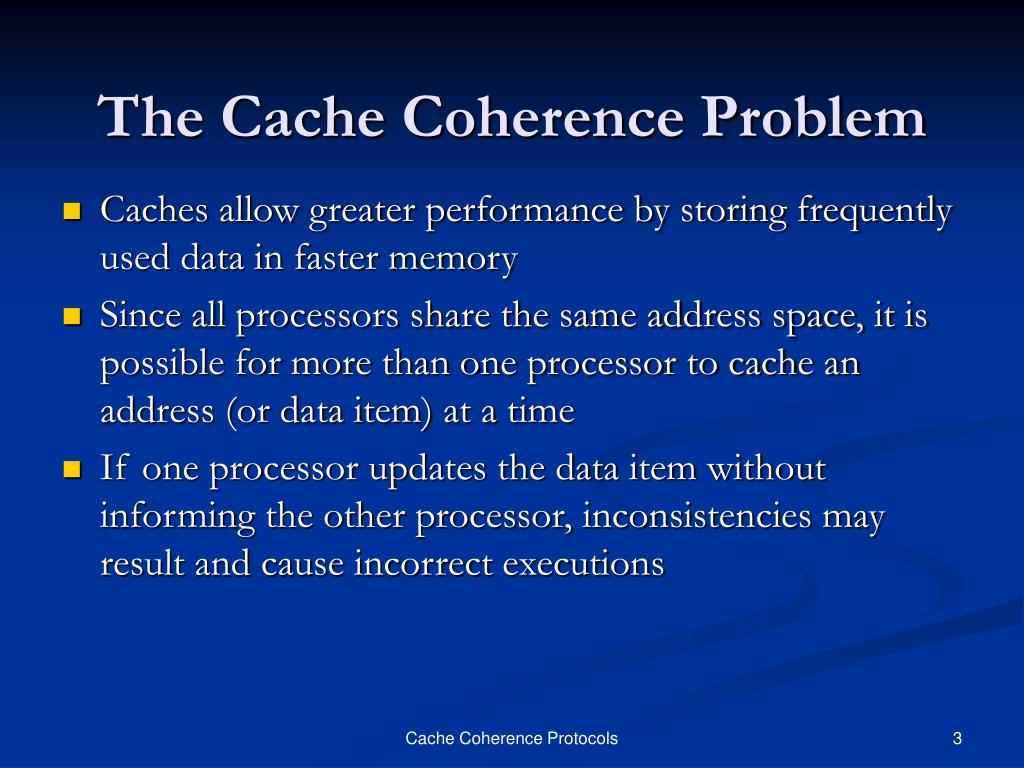

When one core wants to write to a memory location, access to that location must be taken away from other cores (to keep them from reading old/stale values). Invariant 1 shows why sharing read-only data is OK while sharing writable data can cause performance problems. Single-Writer, Multiple Reader Invariant: For a memory location A, at any given logical time, there exists only a single core that may write to A (and read from A), or some number of cores (maybe 0) that may only read A.ĭata-Value Invariant - The value at any given memory location A at the start of an epoch is the same as the value of the memory location at the end of its last read-write epoch. Cache CoherenceĬache coherence is often defined using two invariants, as taken from A Primer on Memory Consistency and Cache Coherence: The problem comes when multiple threads want to write to that piece of memory, and this goes back to cache coherence. Why is sharing data so bad? It’s not! If we have many threads that are only reading some shared pieces of memory, our performance shouldn’t suffer.

This tutorial was inspired by the following talk on performance by Timur Doumler:

The links below are to the source code used and video version of this tutorial: This tutorial provides a basic introduction to false sharing through some simple benchmarking and profiling. However, our data layout and architecture may introduce unintentional sharing, known as false sharing. In some circumstances, sharing is unavoidable. One of the most important considerations when writing parallel applications is how different threads or processes share data. The Performance Implications of False Sharing
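The benchmark code itself does not appear in the text, so here is a minimal sketch of the kind of experiment this tutorial describes, not the author's actual source. It assumes 64-byte coherence blocks and uses plain std::chrono timing rather than Google Benchmark. The names kBlockSize, SharedBlock, PaddedCounter, blockIndex, timeThreads and runDemo are all made up for illustration, and work() is only a stand-in for the tutorial's function of the same name. Four threads each increment their own atomic counter, first with all counters packed into one coherence block, then with each counter padded out to its own block.

```cpp
#include <array>
#include <atomic>
#include <cassert>
#include <chrono>
#include <cstddef>
#include <cstdint>
#include <cstdio>
#include <thread>
#include <vector>

// Assumption: 64-byte coherence blocks (common on x86-64, not universal).
constexpr std::size_t kBlockSize = 64;
constexpr int kThreads = 4;
constexpr int kIters = 100000;  // 0x186a0 iterations per call to work()

// Illustrative stand-in for work(): atomically increment a counter in a loop.
void work(std::atomic<int>& counter) {
  for (int i = 0; i < kIters; ++i) counter++;
}

// Four counters packed into one aligned struct: all land in the same block.
struct alignas(kBlockSize) SharedBlock {
  std::atomic<int> counters[kThreads] = {};
};

// One counter per block: alignas pads each element out to kBlockSize bytes.
struct alignas(kBlockSize) PaddedCounter {
  std::atomic<int> value{0};
};

// Index of the coherence block that holds the given counter.
std::uintptr_t blockIndex(const std::atomic<int>& counter) {
  return reinterpret_cast<std::uintptr_t>(&counter) / kBlockSize;
}

using CounterPtrs = std::array<std::atomic<int>*, kThreads>;

// Run work() on one thread per counter and return wall-clock milliseconds.
double timeThreads(const CounterPtrs& counters) {
  auto start = std::chrono::steady_clock::now();
  std::vector<std::thread> threads;
  for (std::atomic<int>* c : counters) threads.emplace_back([c] { work(*c); });
  for (auto& t : threads) t.join();
  std::chrono::duration<double, std::milli> elapsed =
      std::chrono::steady_clock::now() - start;
  return elapsed.count();
}

// Time both layouts and print the results; returns true if all counts are right.
bool runDemo() {
  SharedBlock shared;
  PaddedCounter padded[kThreads];
  CounterPtrs sharedPtrs{}, paddedPtrs{};
  for (int i = 0; i < kThreads; ++i) {
    sharedPtrs[i] = &shared.counters[i];
    paddedPtrs[i] = &padded[i].value;
  }
  // Same block for the packed counters, distinct blocks for the padded ones.
  assert(blockIndex(shared.counters[0]) == blockIndex(shared.counters[kThreads - 1]));
  assert(blockIndex(padded[0].value) != blockIndex(padded[1].value));

  std::printf("false sharing: %.3f ms\n", timeThreads(sharedPtrs));
  std::printf("no sharing:    %.3f ms\n", timeThreads(paddedPtrs));

  bool ok = true;
  for (int i = 0; i < kThreads; ++i)
    ok = ok && shared.counters[i] == kIters && padded[i].value == kIters;
  return ok;
}
```

Calling runDemo() from main() and building with optimizations and thread support (for example -O2 -pthread) prints one wall-clock time per layout. The absolute numbers depend on the machine, so none are quoted here. The point of the sketch is that the two runs differ only in which coherence block each counter maps to.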
